Spock II: Databases and Sessions!

Last week we learned the basics of the Spock library. We saw how to set up some simple routes. Like Servant, there's a bit of dependent-type machinery with routing. But we didn't need to learn any complex operators. We just needed to match up the number of arguments to our routes. We also saw how to use an application state to persist some data between requests.

This week, we'll add a couple more complex features to our Spock application. First we'll connect to a database. Second, we'll use sessions to keep track of users.

For some more examples of useful Haskell libraries, check out our Production Checklist!

Adding a Database

Last week, we added some global application state. Even with this improvement, our visitor count doesn't persist. When we reset the server, everything goes away, and our users will see a different number. We can change this by adding a database connection to our server. We'll follow the Spock tutorial example and connect to an SQLite database by using Persistent.

If you haven't used Persistent before, take a look at this tutorial in our Haskell Web series! You can also look at our sample code on GitHub for any of the boilerplate you might be missing. Here's the super simple schema we'll use. Remember that Persistent will give us an auto-incrementing primary key.

share [mkPersist sqlSettings, mkMigrate "migrateAll"] [persistLowerCase|
NameEntry json
  name Text
  deriving Show
|]

Spock expects us to give it a pool of database connections. So let's create one for an SQLite file using createSqlitePool. This function needs to run in a logging monad, so we wrap it in runStdoutLoggingT. While we're at it, we can migrate our database from the main startup function. This ensures we're using an up-to-date schema:

import Control.Monad.Logger (runStdoutLoggingT)
import Database.Persist.Sqlite (createSqlitePool, runMigration, runSqlPool)

...

main :: IO ()
main = do
  ref <- newIORef M.empty
  pool <- runStdoutLoggingT $ createSqlitePool "spock_example.db" 5
  runStdoutLoggingT $ runSqlPool (runMigration migrateAll) pool
  ...

Now that we've created this pool, we can pass it to our configuration using the PCPool constructor. We're now using SqlBackend as the connection type for our server, so we'll also have to change the type of our router to reflect this:

main :: IO ()
main = do
  …
  spockConfig <-
    defaultSpockCfg EmptySession (PCPool pool) (AppState ref)
  runSpock 8080 (spock spockConfig app)

app :: SpockM SqlBackend MySession AppState ()
app = ...

Now we want to update our route action to access the database instead of this map. But first, we'll write a helper function that will allow us to call any SQL action from within our SpockM monad. It looks like this:

runSQL :: (HasSpock m, SpockConn m ~ SqlBackend)
       => SqlPersistT (LoggingT IO) a -> m a
runSQL action = runQuery $ \conn ->
  runStdoutLoggingT $ runSqlConn action conn

At the core of this is the runQuery function from the Spock library, which runs our action with a connection from the pool. It works because our router now uses SpockM SqlBackend instead of SpockM (). Now let's write a couple of SQL actions we can use. The first performs a lookup by name and returns the key of the first matching entry, if one exists. The second inserts a new name and returns its key.

fetchByName
  :: T.Text
  -> SqlPersistT (LoggingT IO) (Maybe Int64)
fetchByName name = fmap (fromSqlKey . entityKey) <$>
  (listToMaybe <$> selectList [NameEntryName ==. name] [])

insertAndReturnKey
  :: T.Text
  -> SqlPersistT (LoggingT IO) Int64
insertAndReturnKey name = fromSqlKey <$> insert (NameEntry name)

Now we can use these functions instead of our map!

app :: SpockM SqlBackend MySession AppState ()
app = do
  get root $ text "Hello World!"
  get ("hello" <//> var) $ \name -> do
    existingKeyMaybe <- runSQL $ fetchByName name
    visitorNumber <- case existingKeyMaybe of
      Nothing -> runSQL $ insertAndReturnKey name
      Just i -> return i
    text ("Hello " <> name <> ", you are visitor number " <>
      T.pack (show visitorNumber))

And voila! We can shut down our server between runs, and we'll preserve the visitors we've seen!
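The route above follows a "fetch, else insert" pattern: look the name up, and only insert a new row when the lookup comes back empty. As a rough sketch of that control flow without Spock or a real database, here is a hypothetical getOrCreate helper run against an in-memory stand-in for the NameEntry table (an IORef holding a Map plus the next key). The helper, the FakeTable type and the fake fetch/insert functions are illustrative assumptions, not part of Spock or Persistent.

```haskell
import Data.IORef (IORef, atomicModifyIORef', newIORef, readIORef)
import qualified Data.Map.Strict as M

-- Hypothetical generic helper: run the lookup, and only run the insert
-- when the lookup comes back empty (the same shape as the route's case).
getOrCreate :: Monad m => (k -> m (Maybe v)) -> (k -> m v) -> k -> m v
getOrCreate fetch create key = do
  existing <- fetch key
  case existing of
    Just v -> return v
    Nothing -> create key

-- Hypothetical in-memory stand-in for the NameEntry table:
-- rows keyed by name, plus the next auto-increment key to hand out.
data FakeTable = FakeTable (M.Map String Int) Int

newFakeTable :: IO (IORef FakeTable)
newFakeTable = newIORef (FakeTable M.empty 1)

-- Plays the role of fetchByName: Nothing if the name has no row yet.
fakeFetch :: IORef FakeTable -> String -> IO (Maybe Int)
fakeFetch ref name = do
  FakeTable rows _ <- readIORef ref
  return (M.lookup name rows)

-- Plays the role of insertAndReturnKey: store the name under the next key.
fakeInsert :: IORef FakeTable -> String -> IO Int
fakeInsert ref name = atomicModifyIORef' ref $ \(FakeTable rows next) ->
  (FakeTable (M.insert name next rows) (next + 1), next)

-- The route's logic for picking a visitor number, run against the fake table.
visitorNumberFor :: IORef FakeTable -> String -> IO Int
visitorNumberFor ref = getOrCreate (fakeFetch ref) (fakeInsert ref)
```

Visiting as "james", "katie", then "james" again should give 1, 2 and then 1 again. Note that the lookup and the insert are separate steps here, just as they are separate runSQL calls in the route, so two first-time visits with the same name arriving at once could both insert; a uniqueness constraint on the name column would be one way to close that gap.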

Tracking Users

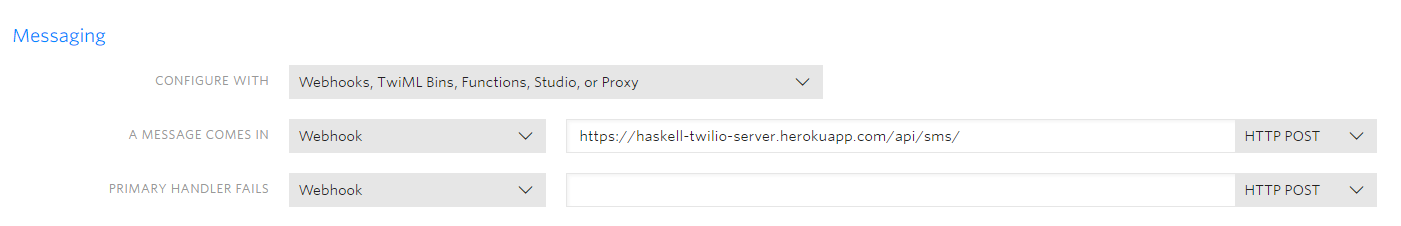

Now, using a route to identify our users isn't what we want to do. Anyone can visit any route, after all! So for the last modification to the server, we're going to add a small "login" feature. We'll use the app's session to track which user is currently visiting. Our new flow will look like this:

- We'll change our entry route to /hello.
- If the user visits this, we'll show a field allowing them to enter their name and log in.
- Pressing the login button will send a POST request to our server. This will update the session to match the session ID with the username.
- It will then send the user to the /home page, which will greet them and present a logout button.
- If they log out, we'll clear the session.

Note that using the session is different from using the app state map that we had in the first part. We share the app state across everyone who uses our server. But the session will contain user-specific references.

Adding a Session

The first step is to change our session type. Once again, we'll use an IORef wrapper around a map. This time, though, we'll use a simple type synonym to keep things short. Here's our type definition and the updated main function.

type MySession = IORef (M.Map T.Text T.Text)

main :: IO ()
main = do
  ref <- newIORef M.empty
  -- Initialize a reference for the session
  sessionRef <- newIORef M.empty
  pool <- runStdoutLoggingT $ createSqlitePool "spock_example.db" 5
  runStdoutLoggingT $ runSqlPool (runMigration migrateAll) pool
  -- Pass that reference!
  spockConfig <-
    defaultSpockCfg sessionRef (PCPool pool) (AppState ref)
  runSpock 8080 (spock spockConfig app)

Updating the Hello Page

Now let's update our "Hello" page. Check out the appendix below for what our helloHTML looks like. It's a "login" form with a username field and a submit button.

-- Notice we use MySession!
app :: SpockM SqlBackend MySession AppState ()
app = do
  get root $ text "Hello World!"
  get "hello" $ html helloHTML
  ...

Now we need to add a handler for the POST request to /hello, so we'll use the post function instead of get. This time, instead of our action taking an argument, we'll extract the request body using the body function. If our application were more complicated, we would want to use a proper library for form URL encoding and decoding. But for this small example, we'll use a simple helper, decodeUsername. You can view this helper in the appendix.

app :: SpockM SqlBackend MySession AppState ()
app = do
  …
  post "hello" $ do
    nameEntry <- decodeUsername <$> body
    ...

Now we want to save this user in our session and then redirect them to the home page. First we'll need to get the session ID and the session itself. We use the functions getSessionId and readSession for this. Then we'll update our session by associating the name with the session ID. Finally, we'll redirect to home.

post "hello" $ do
  nameEntry <- decodeUsername <$> body
  sessId <- getSessionId
  currentSessionRef <- readSession
  liftIO $ modifyIORef' currentSessionRef $
    M.insert sessId (nameEntryName nameEntry)
  redirect "home"

The Home Page

Now on the home page, we'll want to check if we've got a user associated with the session ID. If we do, we'll display some text greeting that user (and also display a logout button). Again, we need to invoke getSessionId and readSession. If we have no user associated with the session, we'll bounce them back to the hello page.

get "home" $ do
  sessId <- getSessionId
  currentSessionRef <- readSession
  currentSession <- liftIO $ readIORef currentSessionRef
  case M.lookup sessId currentSession of
    Nothing -> redirect "hello"
    Just name -> html $ homeHTML name

The last piece of functionality we need is logging out. We'll follow the familiar pattern of getting the session ID and the session. This time, we'll change the session by deleting the entry for this session ID. Then we'll redirect the user back to the hello page.

post "logout" $ do
  sessId <- getSessionId
  currentSessionRef <- readSession
  liftIO $ modifyIORef' currentSessionRef $ M.delete sessId
  redirect "hello"

And now our site tracks our users' sessions! Different sessions (say, two different browsers) can visit the same page as different users!
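Stripped of the Spock plumbing, the three handlers above boil down to three operations on one map from session ID to username: an insert on login, a lookup on the home page, and a delete on logout. Here's a minimal sketch of just that logic in plain IO. It assumes session IDs are Text values, and loginAs, currentUser and logout are hypothetical names standing in for the route handlers, not Spock functions.

```haskell
{-# LANGUAGE OverloadedStrings #-}
import Data.IORef (IORef, modifyIORef', newIORef, readIORef)
import qualified Data.Map.Strict as M
import qualified Data.Text as T

-- Same shape as MySession: session ID -> username
type SessionStore = IORef (M.Map T.Text T.Text)

-- What POST /hello does: remember which user this session belongs to
loginAs :: SessionStore -> T.Text -> T.Text -> IO ()
loginAs store sessId name = modifyIORef' store (M.insert sessId name)

-- What GET /home does: Nothing means "redirect back to hello"
currentUser :: SessionStore -> T.Text -> IO (Maybe T.Text)
currentUser store sessId = M.lookup sessId <$> readIORef store

-- What POST /logout does: forget only this session's user
logout :: SessionStore -> T.Text -> IO ()
logout store sessId = modifyIORef' store (M.delete sessId)
```

Logging out one session leaves the other session's username in place. Keying by session ID matters because main passes the single sessionRef to defaultSpockCfg as the session value; as far as we can tell, that means every session ends up holding a pointer to the same map.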

Conclusion

This wraps up our exploration of the Spock library! We've taken a broad but shallow look at some of the different features Spock has to offer. We saw several different ways to persist information across requests on our server! Connecting to a database is the most important of these. But sessions, a fairly advanced feature, are quite easy to use in Spock!

For some more cool examples of Haskell web libraries, take a look at our Web Skills Series! You can also download our Production Checklist for even more ideas!

Appendix - HTML Fragments and Helpers

helloHTML :: T.Text
helloHTML =
  "<html>\
  \<body>\
  \<p>Hello! Please enter your username!\
  \<form action=\"/hello\" method=\"post\">\
  \Username: <input type=\"text\" name=\"username\"><br>\
  \<input type=\"submit\"><br>\
  \</form>\
  \</body>\
  \</html>"

homeHTML :: T.Text -> T.Text
homeHTML name =
  "<html><body><p>Hello " <> name <>
  "</p>\
  \<form action=\"logout\" method=\"post\">\
  \<input type=\"submit\" name=\"logout_button\"><br>\
  \</form>\
  \</body>\
  \</html>"

-- Note: 61 -> '=' in ASCII
-- We expect input like "username=christopher"
parseUsername :: B.ByteString -> T.Text
parseUsername input = decodeUtf8 $ B.drop 1 tail_
  where
    tail_ = B.dropWhile (/= 61) input

-- Wraps the parsed name in the NameEntry record used by the "hello" POST handler
decodeUsername :: B.ByteString -> NameEntry
decodeUsername = NameEntry . parseUsername

Simple Web Routing with Spock!

In our Haskell Web Series, we go over the basics of how we can build a web application with Haskell. That includes using Persistent for our database layer, and Servant for our HTTP layer. But these aren't the only libraries for those tasks in the Haskell ecosystem.

We've already looked at how to use Beam as another potential database library. In these next two articles, we'll examine Spock, another HTTP library. We'll compare it to Servant and see what the different design decisions are. We'll start this week by looking at the basics of routing. We'll also see how to use a global application state to coordinate information on our server. Next week, we'll see how to hook up a database and use sessions.

For some useful libraries, make sure to download our Production Checklist. It will give you some more ideas for libraries you can use even beyond these! Also, you can follow along with the code by looking at our GitHub repository!

Getting Started

Spock gives us a helpful starting point for making a basic server. We'll begin by taking a look at the starter code on their homepage. Here's our initial adaptation of it:

data MySession = EmptySession
data MyAppState = DummyAppState (IORef Int)

main :: IO ()
main = do
  ref <- newIORef 0
  spockConfig <- defaultSpockCfg EmptySession PCNoDatabase (DummyAppState ref)
  runSpock 8080 (spock spockConfig app)

app :: SpockM () MySession MyAppState ()
app = do
  get root $ text "Hello World!"
  get ("hello" <//> var) $ \name -> do
    (DummyAppState ref) <- getState
    visitorNumber <- liftIO $ atomicModifyIORef' ref $ \i -> (i+1, i+1)
    text ("Hello " <> name <> ", you are visitor number " <> T.pack (show visitorNumber))

In our main function, we initialize an IORef that we'll use as the only "state" of our application. Then we create a configuration object for our server. Last, we run our server using our app specification of the actual routes.

The configuration has a few important fields attached to it. For now, we're using dummy values for all of them. Our config wants a session, which we've defined as EmptySession. It also wants some kind of database, which we'll add later (for now we pass PCNoDatabase). Finally, it includes an application state, and for now we'll only supply our pointer to an integer. We'll see later how we can add a bit more flavor to each of these parameters. But for the moment, let's dig a bit deeper into the app expression that defines the routing for our server.

The SpockM Monad

Our router lives in the SpockM monad. We can see this has three different type parameters (plus the return type). Remember that defaultSpockCfg had three comparable arguments! We have the empty session as MySession and the IORef app state as MyAppState. Finally, there's an extra () parameter corresponding to our empty database. (The return value of our router is also ().)

Each statement in this monad defines a route, and each route is tied to an HTTP verb, as you might expect. At the moment, our router only has a couple of get routes. The first lies at the root of our path and outputs Hello World!. The second lies at hello/{name}. It will print a message specifying the input name while keeping track of how many visitors we've had.

Composing Routes

Now let's talk a little bit about the structure of our router code. The SpockM monad works like a Writer monad: each action we take adds a new route to the application. In this case, we take two actions, each responding to GET requests (we'll see an example of a POST request next week).
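To make the Writer comparison a little more concrete, here's a toy route builder written in plain Haskell. RouteBuilder, get and routes here are hypothetical stand-ins rather than Spock's API, and the sketch says nothing about how SpockM is actually implemented. Each get call appends one (verb, path) entry to a log, and do-notation strings those entries together in order.

```haskell
-- A toy Writer-style monad: a log of registered routes plus a result value.
newtype RouteBuilder a = RouteBuilder ([(String, String)], a)

instance Functor RouteBuilder where
  fmap f (RouteBuilder (rs, a)) = RouteBuilder (rs, f a)

instance Applicative RouteBuilder where
  pure a = RouteBuilder ([], a)
  RouteBuilder (rs1, f) <*> RouteBuilder (rs2, a) = RouteBuilder (rs1 ++ rs2, f a)

-- Sequencing concatenates the logs, so routes accumulate in declaration order.
instance Monad RouteBuilder where
  RouteBuilder (rs1, a) >>= k =
    let RouteBuilder (rs2, b) = k a
     in RouteBuilder (rs1 ++ rs2, b)

-- Each "verb" action just appends one entry to the log.
get :: String -> RouteBuilder ()
get path = RouteBuilder ([("GET", path)], ())

-- Extract the accumulated routes.
routes :: RouteBuilder a -> [(String, String)]
routes (RouteBuilder (rs, _)) = rs

-- Mirrors the shape of our app: two GET routes, declared in sequence.
toyApp :: RouteBuilder ()
toyApp = do
  get "/"
  get "/hello/:name"
```

Here routes toyApp yields the two entries in the order they were declared, which is the intuition behind "each action adds a new route".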

For any of our HTTP verbs, the first argument will be a representation of the path. On our first route, we use the hard-coded root expression to refer to the / path. For our second expression, we have a couple of different components that we combine with <//>.

First, we have a string path component hello. We could combine other strings as well. Let's suppose we wanted the route /api/hello/world. We'd use the expression:

"api" <//> "hello" <//> "world"

In our original code, though, the second part of the path is a var. This allows us to capture information from the path. When we visit /hello/james, we'll be able to get the path component james as a variable. Spock passes this value to the function we give as the second argument of the get combinator.

The types involved here (Spock's RouteSpec) are rather complicated, but we don't need to go into the details. The simplest thing our action can return is some raw text, using the text combinator. (We could also use html if we have our own template.) We conclude both our route definitions this way.

Notice that the expression for our first route has no parameters, while the second has one parameter. As you might guess, the parameter in the second route refers to the variable we can pull out of the path thanks to var. We have the same number of var elements in the path as we do arguments to the function. Spock uses dependent types to ensure these match.

Using the App State

Now that we know the basics, let's start using some of Spock's more advanced features. This week, we'll see how to use the App State.

Currently, we bump the visitor count each time we visit the route with a name, even if that name is the same. So visiting /hello/michael the first time results in:

Hello michael, you are visitor number 1

Then we'll visit again and see:

Hello michael, you are visitor number 2

Instead, let's make it so we assign each name to a particular number. This way, when a user visits the same route again, they'll see what number they originally were.

Making this change is rather easy. Instead of using an IORef on an Int for our state, we'll use a mapping from Text to Int:

data AppState = AppState (IORef (M.Map Text Int))

Now we'll initialize our ref with an empty map and pass it to our config:

main :: IO ()
main = do
  ref <- newIORef M.empty
  spockConfig <- defaultSpockCfg EmptySession PCNoDatabase (AppState ref)
  runSpock 8080 (spock spockConfig app)

And for our hello/{name} route, we'll update it to follow this process:

- Get the map reference
- See if we have an entry for this user yet.
- If not, insert them with the next number (the size of the map plus one), and write this back to our IORef
- Return the message

This process is pretty straightforward. Let's see what it looks like:

app :: SpockM () MySession AppState ()
app = do
  get root $ text "Hello World!"
  get ("hello" <//> var) $ \name -> do
    (AppState mapRef) <- getState
    visitorNumber <- liftIO $ atomicModifyIORef' mapRef $ updateMapWithName name
    text ("Hello " <> name <> ", you are visitor number " <> T.pack (show visitorNumber))

updateMapWithName :: T.Text -> M.Map T.Text Int -> (M.Map T.Text Int, Int)
updateMapWithName name nameMap = case M.lookup name nameMap of
    Nothing -> (M.insert name (mapSize + 1) nameMap, mapSize + 1)
    Just i -> (nameMap, i)
  where
    mapSize = M.size nameMap

We create a function to update the map every time our app encounters a new name. Then we update our IORef with atomicModifyIORef'. And now if we visit /hello/michael twice in a row, we'll get the same output both times!
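To check that repeat visitors keep their number without starting a server, we can drive updateMapWithName directly. The sketch below copies the function from above and replays a made-up sequence of visits through atomicModifyIORef', the same way the route does; simulateVisits is a hypothetical helper for this demonstration only.

```haskell
{-# LANGUAGE OverloadedStrings #-}
import Data.IORef (atomicModifyIORef', newIORef)
import qualified Data.Map as M
import qualified Data.Text as T

-- Same function as in the route: known names keep their number,
-- new names get the next one (map size + 1).
updateMapWithName :: T.Text -> M.Map T.Text Int -> (M.Map T.Text Int, Int)
updateMapWithName name nameMap = case M.lookup name nameMap of
    Nothing -> (M.insert name (mapSize + 1) nameMap, mapSize + 1)
    Just i -> (nameMap, i)
  where
    mapSize = M.size nameMap

-- Replay visits against a fresh IORef, one atomic update per "request",
-- and collect the visitor numbers that were handed out.
simulateVisits :: [T.Text] -> IO [Int]
simulateVisits names = do
  ref <- newIORef M.empty
  mapM (atomicModifyIORef' ref . updateMapWithName) names
```

Replaying michael, james, michael should hand out 1, 2, 1. Doing the lookup and the insert inside a single atomicModifyIORef' call is what keeps two simultaneous visits by new names from being handed the same number.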

Conclusion

That's as far as we'll go this week! We covered how to make a basic application in Spock and saw the basics of composing routes. Then we saw how we could use the app state to keep track of information across requests. Next week, we'll improve this process by adding a database to our application. We'll also use sessions to keep track of users.

For more cool libraries, read up on our Haskell Web Series. Also, you can download our Production Checklist for more ideas!

Keeping it Clean: Haskell Code Formatters

A long time ago, we had an article that featured some tips on how to organize your import statements. As far as I remember, that’s the only piece we’ve ever done on code formatting. But as you work on projects with more people, formatting is one thing you need to consider to keep everyone sane. You’d like to have a consistent style across the code base. That way, there’s less controversy in code reviews, and people don't need to think as much to update code. They shouldn't have to wonder whether they're following the style guide, or how the surrounding code happens to be formatted.

This week, we’re going to go over three different Haskell code formatting tools. We’ll examine Stylish Haskell, Hindent, and Brittany. These all have their pluses and minuses, as we’ll see.

For some ideas of what Haskell projects you can do, download our Production Checklist. You can also take our free Stack mini-course and learn how to use Stack to organize your code!

Stylish Haskell

The first tool we’ll look at is Stylish Haskell. This is a straightforward tool to use, as it does some cool things with no configuration required. Let’s take a look at a poorly formatted version of code from our Beam article.

{-# LANGUAGE DeriveGeneric #-}
{-# LANGUAGE FlexibleContexts #-}
{-# LANGUAGE FlexibleInstances #-}
{-# LANGUAGE GADTs #-}
{-# LANGUAGE MultiParamTypeClasses #-}
{-# LANGUAGE OverloadedStrings #-}
{-# LANGUAGE StandaloneDeriving #-}
{-# LANGUAGE TypeApplications #-}
{-# LANGUAGE TypeFamilies #-}
{-# LANGUAGE TypeSynonymInstances #-}
{-# LANGUAGE ImpredicativeTypes #-}

module Schema where

import Database.Beam
import Database.Beam.Backend
import Database.Beam.Migrate
import Database.Beam.Sqlite
import Database.SQLite.Simple (open, Connection)

import Data.Int (Int64)
import Data.Text (Text)
import Data.Time (UTCTime)
import qualified Data.UUID as U

data UserT f = User
  { _userId :: Columnar f Int64
  , _userName :: Columnar f Text
  , _userEmail :: Columnar f Text
  , _userAge :: Columnar f Int
  , _userOccupation :: Columnar f Text
  } deriving (Generic)

There are many undesirable things here. Our language pragmas don’t line up their end braces. They also aren’t in any discernible order. Our imports are also not lined up, and neither are the fields in our data types.

Stylish Haskell can fix all this. First, we’ll install it globally with:

stack install stylish-haskell

(You can also use cabal instead of stack.) Then we can call the stylish-haskell command on a file. By default, it will output the results to the terminal. But if we pass the -i flag, it will update the file in place. This will make all the changes we want to line up the various statements in our file!

>> stylish-haskell -i Schema.hs

--- Result:

{-# LANGUAGE DeriveGeneric          #-}
{-# LANGUAGE FlexibleContexts      #-}
{-# LANGUAGE FlexibleInstances     #-}
{-# LANGUAGE GADTs                 #-}
{-# LANGUAGE ImpredicativeTypes    #-}
{-# LANGUAGE MultiParamTypeClasses #-}
{-# LANGUAGE OverloadedStrings     #-}
{-# LANGUAGE StandaloneDeriving    #-}
{-# LANGUAGE TypeApplications      #-}
{-# LANGUAGE TypeFamilies          #-}
{-# LANGUAGE TypeSynonymInstances  #-}

module Schema where

import           Database.Beam
import           Database.Beam.Backend
import           Database.Beam.Migrate
import           Database.Beam.Sqlite
import           Database.SQLite.Simple (Connection, open)

import           Data.Int               (Int64)
import           Data.Text              (Text)
import           Data.Time              (UTCTime)
import qualified Data.UUID              as U

data UserT f = User
  { _userId         :: Columnar f Int64
  , _userName       :: Columnar f Text
  , _userEmail      :: Columnar f Text
  , _userAge        :: Columnar f Int
  , _userOccupation :: Columnar f Text
  } deriving (Generic)

Stylish Haskell integrates well with most common editors. For instance, if you use Vim, you can also run the command from within the editor with the command:

:%!stylish-haskell

We get all these features without any configuration. If we want to change things, though, we can create a configuration file. We’ll make a default file with the following command:

stylish-haskell --defaults > .stylish-haskell.yaml

Then, if we want, we can modify it a bit. For one example, we've aligned our imports above globally. This means they all leave space for qualified. But we can decide we don’t want a group of imports to have that space if there are no qualified imports. There’s a setting for this in the config. By default, it looks like this:

imports:
  align: global

We can change it to group to ensure our imports are only aligned within their grouping.

imports:
  align: group

And now when we run the command, we’ll get a different result:

module Schema where

import Database.Beam
import Database.Beam.Backend
import Database.Beam.Migrate
import Database.Beam.Sqlite
import Database.SQLite.Simple (Connection, open)

import           Data.Int  (Int64)
import           Data.Text (Text)
import           Data.Time (UTCTime)
import qualified Data.UUID as U

So, in short, Stylish Haskell is a great tool for a limited scope. It has uncontroversial suggestions for several areas like imports and pragmas. It also removes trailing whitespace and adjusts case statements sensibly. That said, it doesn’t reformat the bulk of your Haskell code, meaning your function definitions and expressions. Let’s look at a couple of tools that can do that.

Hindent

Another program we can use is hindent. As its name implies, it deals with updating whitespace and indentation levels. Let’s look at a very simple example. Consider this code, adapted from our Beam article:

user1' = User default_ (val_ "James") (val_ "james@example.com") (val_ 25) (val_ "programmer")

findUsers :: Connection -> IO ()
findUsers conn = runBeamSqlite conn $ do
    users <- runSelectReturningList $ select $ do
        user <- (all_ (_blogUsers blogDb))
        article <- (all_ (_blogArticles blogDb))
        guard_ (user ^. userName ==. (val_ "James"))
        guard_ (article ^. articleUserId ==. user ^. userId)
        return (user, article)
    mapM_ (liftIO . putStrLn . show) users

There are a few things we could change. First, we might want to update the indentation level so that it is 2 instead of 4. Second, we'd like to limit lines to 80 characters. When we run hindent on this file, it’ll make these changes.

user1' =
  User
    default_
    (val_ "James")
    (val_ "james@example.com")
    (val_ 25)
    (val_ "programmer")

findUsers :: Connection -> IO ()
findUsers conn =
  runBeamSqlite conn $ do
    users <-
      runSelectReturningList $
      select $ do
        user <- (all_ (_blogUsers blogDb))
        article <- (all_ (_blogArticles blogDb))
        guard_ (user ^. userName ==. (val_ "James"))
        guard_ (article ^. articleUserId ==. user ^. userId)
        return (user, article)
    mapM_ (liftIO . putStrLn . show) users

Hindent is also configurable. We can create a file named .hindent.yaml. By default, we would have the following configuration:

indent-size: 2
line-length: 80
force-trailing-newline: true

But then we can change it if we want so that the indentation level is 3:

indent-size: 3

And now when we run it, we’ll see that the output has changed to reflect that:

findUsers :: Connection -> IO ()
findUsers conn =
   runBeamSqlite conn $ do
      users <-
         runSelectReturningList $
         select $ do
            user <- (all_ (_blogUsers blogDb))
            article <- (all_ (_blogArticles blogDb))
            guard_ (user ^. userName ==. (val_ "James"))
            guard_ (article ^. articleUserId ==. user ^. userId)
            return (user, article)
      mapM_ (liftIO . putStrLn . show) users

Hindent also has some other effects that, as far as I can tell, are not configurable. You can see that the separation of lines was not preserved above. In another example, it spaced out instance definitions that I had grouped together in another file:

-- BEFORE
deriving instance Show User
deriving instance Eq User
deriving instance Show UserId
deriving instance Eq UserId

-- AFTER
deriving instance Show User

deriving instance Eq User

deriving instance Show UserId

deriving instance Eq UserId

So make sure you’re aware of everything it does before committing to using it. Like stylish-haskell, hindent integrates well with text editors.

Brittany

Brittany is an alternative to Hindent for modifying your expression definitions. It mainly focuses on the use of horizontal space throughout your code. As far as I can see, it doesn’t line up language pragmas or change import statements in the way stylish-haskell does. It also doesn’t touch data type declarations. Instead, it seeks to reformat your code to make maximal use of space while avoiding lines that are too long. As an example, we could look at these lines from our Beam example:

insertArticles :: Connection -> IO ()

insertArticles conn = runBeamSqlite conn $ runInsert $

insert (_blogArticles blogDb) $ insertValues articlesOur decision on where to separate the line is a little bit arbitrary. But at the very least we don’t try to cram it all on one line. But if we have either the approach above or the one-line version, Brittany will change it to this:

brittany --write-mode=inplace MyModule.hs

--

insertArticles :: Connection -> IO ()
insertArticles conn =
  runBeamSqlite conn $ runInsert $ insert (_blogArticles blogDb) $ insertValues
    articles

This makes “better” use of horizontal space, in the sense that it fits as much as possible onto the first line. That said, one could argue that our original version actually looks nicer. Brittany can also change type signatures that overflow the line limit. Suppose we have this arbitrary type signature that’s too long for a single line:

myReallyLongFunction :: State ComplexType Double -> Maybe Double -> Either Double ComplexType -> IO a -> StateT ComplexType IO a

Brittany will fix it up so that each argument type is on its own line:

myReallyLongFunction
  :: State ComplexType Double
  -> Maybe Double
  -> Either Double ComplexType
  -> IO a
  -> StateT ComplexType IO a

This can be useful in projects with very complicated types. The structure makes it easier for you to add Haddock comments to the various arguments.
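To illustrate, here is a small, made-up function (clampScore is hypothetical and not from our Beam code) written in that same one-argument-per-line layout, with a Haddock `-- ^` comment after each argument:

```haskell
-- | Clamp a raw score into an inclusive range.
-- (Hypothetical function, used only to show the signature layout.)
clampScore
  :: Int -- ^ Lowest allowed score
  -> Int -- ^ Highest allowed score
  -> Int -- ^ Raw score to clamp
  -> Int -- ^ The score forced into the range
clampScore lowest highest = max lowest . min highest
```

With one argument per line, each `-- ^` comment sits right next to the type it documents, and Haddock renders them as per-argument descriptions.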

Dangers

There is, of course, a (small) danger to using tools like these. If you’re going to use them, you want to ensure everyone on the project is using them. Suppose person A isn’t using the program and commits code that it hasn’t formatted. Person B might then open that code, and their editor will reformat the file. This leaves them with local changes to the file that aren’t relevant to whatever work they’re doing. This can cause a lot of confusion when they submit code for review. Whoever reviews their code has to sift through the formatting changes, which slows the review down.

People can also have (unreasonably) strong opinions about code formatting. So it’s generally something you want to nail down early on a project and avoid changing afterward. With the examples in this article, I would say it would be an easy sell to use Stylish Haskell on a project. However, the specific choices made by Hindent and Brittany can be more controversial. So it might cause more problems than it would solve to institute those project-wide.

Conclusion

It’s possible to lose a surprising amount of productivity to code formatting. So it can be important to nail down standards early and often. Code formatting programs can make it easy to enforce particular standards. They’re also very simple to incorporate into your projects with stack and your editor of choice!

Now that you know how to format your code, need some suggestions for what to work on next? Take a look at our Production Checklist! It’ll give you some cool ideas of libraries you can use for building Haskell web apps and much more!

Beam: Database Power without Template Haskell!

As part of our Haskell Web Series, we examined the Persistent and Esqueleto libraries. The first of these allows you to create a database schema in a special syntax. You can then use Template Haskell to generate all the necessary Haskell data types and instances for your types. Even better, you can write Haskell code to query on these that resembles SQL. These queries are type-safe, which is awesome. However, the need to specify our schema with Template Haskell presented some drawbacks. For instance, the code takes longer to compile and is less approachable for beginners.

This week on the blog, we'll be exploring another database library called Beam. This library allows us to specify our database schema without using Template Haskell. There's some boilerplate involved, but it's not bad at all! Like Persistent, Beam has support for many backends, such as SQLite and PostgreSQL. Unlike Persistent, Beam also supports join queries as a built-in part of its system.

For some more ideas on advanced libraries, be sure to check out our Production Checklist! It includes a couple more different database options to look at.

Specifying our Types

As a first note, while Beam doesn't require Template Haskell, it does need a lot of other compiler extensions. You can look at those in the appendix below, or else take a look at the example code on GitHub. Now let's think back to how we specified our schema when using Persistent:

import qualified Database.Persist.TH as PTH

PTH.share [PTH.mkPersist PTH.sqlSettings, PTH.mkMigrate "migrateAll"] [PTH.persistLowerCase|
  User sql=users
    name Text
    email Text
    age Int
    occupation Text
    UniqueEmail email
    deriving Show Read Eq

  Article sql=articles
    title Text
    body Text
    publishedTime UTCTime
    authorId UserId
    UniqueTitle title
    deriving Show Read Eq
|]

With Beam, we won't use Template Haskell, so we'll actually be creating normal Haskell data types. There will still be some oddities, though. First, by convention, we'll name our types with an extra T at the end. This is unnecessary, but the convention helps us remember which types relate to tables. We'll also have to provide an extra type parameter f, which we'll get into a bit more later:

data UserT f = User
  …

data ArticleT f = Article
  ...

Our next convention will be to use an underscore in front of our field names. We will also, unlike Persistent, specify the type name in the field names. With these conventions, I'm following the advice of the library's creator, Travis.

data UserT f = User
  { _userId :: ...
  , _userName :: …
  , _userEmail :: …
  , _userAge :: …
  , _userOccupation :: …
  }

data ArticleT f = Article
  { _articleId :: …
  , _articleTitle :: …
  , _articleBody :: …
  , _articlePublishedTime :: …
  }

So when we specify the actual types of each field, we'll just put the relevant data type, like Int, Text or whatever, right? Well, not quite. To complete our types, we're going to fill in each field with the type we want, but wrapped in Columnar f. We'll also derive Generic for both of these types, which allows Beam to work its magic:

data UserT f = User
  { _userId :: Columnar f Int64
  , _userName :: Columnar f Text
  , _userEmail :: Columnar f Text
  , _userAge :: Columnar f Int
  , _userOccupation :: Columnar f Text
  } deriving (Generic)

data ArticleT f = Article
  { _articleId :: Columnar f Int64
  , _articleTitle :: Columnar f Text
  , _articleBody :: Columnar f Text
  , _articlePublishedTime :: Columnar f Int64 -- Unix Epoch
  } deriving (Generic)

Now there are a couple of small differences between this and our previous schema. First, we have the primary key as an explicit field of our type. With Persistent, we separated it using the Entity abstraction. We'll see below how we can deal with situations where that key isn't known. The second difference is that (for now), we've left out the userId field on the article. We'll add this when we deal with primary keys.

Columnar

So what exactly is this Columnar business about? Well, under most circumstances, we'd like to specify a User with the raw field types. But there are some situations where we'll have to use a more complicated type for an SQL expression. Let's start with the simple case.

Luckily, Columnar works in such a way that if we use Identity for f, we can use raw types to fill in the field values. We'll make a type synonym specifically for this identity case. We can then make some examples:

type User = UserT Identity
type Article = ArticleT Identity

user1 :: User
user1 = User 1 "James" "james@example.com" 25 "programmer"

user2 :: User
user2 = User 2 "Katie" "katie@example.com" 25 "engineer"

users :: [User]
users = [ user1, user2 ]

As a note, if you find it cumbersome to repeat Columnar, you can use its shorter synonym C:

data UserT f = User
  { _userId :: C f Int64
  , _userName :: C f Text
  , _userEmail :: C f Text
  , _userAge :: C f Int
  , _userOccupation :: C f Text
  } deriving (Generic)

Now, our initial examples will fill in all our fields with raw values, so at first we won't need anything besides Identity for the f parameter. Further down, though, we'll deal with auto-incrementing primary keys. There we'll use the default_ function, whose type is a Beam SQL expression rather than a raw value. So f will be a different type in that case, but the flexibility of Columnar lets us keep using our User constructor!
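Here is a self-contained toy model of that flexibility. It is a simplified sketch, not Beam's implementation: Col is a hypothetical stand-in for Columnar (Beam's real type family has more cases), and Maybe merely plays the part of "some other f", loosely standing for fields whose values aren't known yet.

```haskell
{-# LANGUAGE TypeFamilies #-}

import Data.Functor.Identity (Identity)
import Data.Int (Int64)

-- Hypothetical stand-in for Beam's Columnar: with Identity the field
-- collapses to the raw type; with any other f it is wrapped in f.
type family Col f a where
  Col Identity a = a
  Col f a = f a

-- One data declaration, usable at several choices of f.
data UserT f = User
  { _userId   :: Col f Int64
  , _userName :: Col f String
  }

-- With Identity, the fields hold plain values.
type User = UserT Identity

-- With Maybe, a field can be left empty, loosely like an ID
-- that the database has not assigned yet.
type PartialUser = UserT Maybe

james :: User
james = User 1 "James"

newJames :: PartialUser
newJames = User Nothing (Just "James")
```

With f = Identity, the fields are plain values, just like user1 above. Swapping in another f changes the type of every field at once, with no second data declaration.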

Instances for Our Types

Now that we've specified our types, we can use the Beamable and Table type classes to tell Beam more about our types. Before we can make any of these types a Table, we'll want to assign its primary key type. So let's make a couple more type synonyms to represent these:

type UserId = PrimaryKey UserT Identity
type ArticleId = PrimaryKey ArticleT Identity

While we're at it, let's add that foreign key to our Article type:

data ArticleT f = Article
  { _articleId :: Columnar f Int64
  , _articleTitle :: Columnar f Text
  , _articleBody :: Columnar f Text
  , _articlePublishedTime :: Columnar f Int64
  , _articleUserId :: PrimaryKey UserT f
  } deriving (Generic)

We can now generate Beamable instances both for our main types and for the primary key types. We'll also derive instances for Show and Eq:

data UserT f =
  ...

deriving instance Show User
deriving instance Eq User

instance Beamable UserT
instance Beamable (PrimaryKey UserT)

-- Article embeds a UserId, so Show/Eq for Article need these as well.
deriving instance Show UserId
deriving instance Eq UserId

data ArticleT f =
  ...

deriving instance Show Article
deriving instance Eq Article

instance Beamable ArticleT
instance Beamable (PrimaryKey ArticleT)

Now we'll create an instance of the Table class. This involves some type family syntax: we declare a data family instance for PrimaryKey, with UserId and ArticleId as its constructors. Then we fill in the primaryKey function to pick out the right field.

instance Table UserT where
  data PrimaryKey UserT f = UserId (Columnar f Int64) deriving Generic
  primaryKey = UserId . _userId

instance Table ArticleT where
  data PrimaryKey ArticleT f = ArticleId (Columnar f Int64) deriving Generic
  primaryKey = ArticleId . _articleId

Accessor Lenses

We'll do one more thing to mimic Persistent. Persistent's Template Haskell automatically generated field accessors for us, which we could use when making database queries. Below, we'll make lenses that play a similar role, but we'll use a special function, tableLenses, rather than Template Haskell. If you remember back to how we used the Servant Client library, we could create client functions by calling client and matching the result against a pattern. We'll do something similar with tableLenses: we write a pattern using our table's constructor, with LensFor wrapping each field.

User
  (LensFor userId)
  (LensFor userName)
  (LensFor userEmail)
  (LensFor userAge)
  (LensFor userOccupation) = tableLenses

Article
  (LensFor articleId)
  (LensFor articleTitle)
  (LensFor articleBody)
  (LensFor articlePublishedTime)
  (UserId (LensFor articleUserId)) = tableLenses

Note that we have to wrap the foreign key lens in UserId.
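Both servant-client's client and Beam's tableLenses return one value that packs many pieces, which we take apart with a single top-level pattern binding. The self-contained sketch below isolates that trick in plain Haskell. The (:<|>) type here is a home-made stand-in mirroring Servant's definition (it is not imported from servant), and bundle, showIt, lengthIt and negateIt are hypothetical names.

```haskell
{-# LANGUAGE TypeOperators #-}

-- Home-made stand-in for Servant's (:<|>); not imported from servant.
data a :<|> b = a :<|> b
infixr 3 :<|>

-- A hypothetical bundle of three functions, glued together the way
-- servant-client's `client` hands back one function per endpoint.
bundle :: (Int -> String) :<|> (String -> Int) :<|> (Bool -> Bool)
bundle = show :<|> length :<|> not

-- One top-level pattern binding unpacks every piece by position.
showIt :: Int -> String
lengthIt :: String -> Int
negateIt :: Bool -> Bool
showIt :<|> lengthIt :<|> negateIt = bundle
```

In the tableLenses case, the pattern is the table's own constructor (User or Article) with LensFor around each field, but the unpacking works the same way.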

Creating our Database

Now, unlike with Persistent, we'll create an extra type to represent our database. Each of our two tables gets a field in this type:

data BlogDB f = BlogDB
  { _blogUsers :: f (TableEntity UserT)
  , _blogArticles :: f (TableEntity ArticleT)
  } deriving (Generic)

We'll need to make our database type an instance of the Database class. We'll also specify a set of default settings we can use with our database. Both of these involve a parameter be, which stands for the backend (e.g. SQLite or Postgres). We leave this parameter generic for now.

instance Database be BlogDB

blogDb :: DatabaseSettings be BlogDB
blogDb = defaultDbSettings

Inserting into Our Database

Now, migrating our database with Beam is a little more complicated than it is with Persistent. We might cover that in a later article. For now, we'll keep things simple: we'll use an SQLite database and create its tables ourselves. We have to follow Beam's naming conventions here, particularly the user_id__id column for our foreign key:

CREATE TABLE users
  ( id INTEGER PRIMARY KEY AUTOINCREMENT
  , name VARCHAR NOT NULL
  , email VARCHAR NOT NULL
  , age INTEGER NOT NULL
  , occupation VARCHAR NOT NULL
  );

CREATE TABLE articles
  ( id INTEGER PRIMARY KEY AUTOINCREMENT
  , title VARCHAR NOT NULL
  , body VARCHAR NOT NULL
  , published_time INTEGER NOT NULL
  , user_id__id INTEGER NOT NULL
  );

Now we want to write a couple of queries that interact with the database. Let's start by inserting our raw users. We begin by opening an SQLite connection, and we'll write a function that uses this connection:

import Database.SQLite.Simple (open, Connection)

main :: IO ()
main = do
  conn <- open "blogdb1.db"
  insertUsers conn

insertUsers :: Connection -> IO ()
insertUsers = ...

We start our expression by calling runBeamSqlite with the connection. Then we use runInsert to tell Beam that we want to run an insert statement.

import Database.Beam
import Database.Beam.Sqlite

insertUsers :: Connection -> IO ()
insertUsers conn = runBeamSqlite conn $ runInsert $
  ...

Now we'll use the insert function and specify which of our database's tables we're inserting into:

insertUsers :: Connection -> IO ()
insertUsers conn = runBeamSqlite conn $ runInsert $
  insert (_blogUsers blogDb) $ ...

Last, since we are inserting raw values (UserT Identity), we use the insertValues function to complete this call:

insertUsers :: Connection -> IO ()
insertUsers conn = runBeamSqlite conn $ runInsert $
  insert (_blogUsers blogDb) $ insertValues users

And now we can verify that our users exist, for example from the sqlite3 shell:

SELECT * FROM users;
1|James|james@example.com|25|programmer
2|Katie|katie@example.com|25|engineer

Let's do the same for articles. We'll use the pk function to get the primary key of a particular User:

article1 :: Article
article1 = Article 1 "First article"
  "A great article" 1531193221 (pk user1)

article2 :: Article
article2 = Article 2 "Second article"
  "A better article" 1531199221 (pk user2)

article3 :: Article
article3 = Article 3 "Third article"
  "The best article" 1531200221 (pk user1)

articles :: [Article]
articles = [ article1, article2, article3 ]

insertArticles :: Connection -> IO ()
insertArticles conn = runBeamSqlite conn $ runInsert $
  insert (_blogArticles blogDb) $ insertValues articles

Select Queries

Now that we've inserted a few elements, let's run some basic select statements. For selects, we'll generally want the runSelectReturningList function. If we only wanted a single element, we could use runSelectReturningOne instead. Here's the start of our query:

findUsers :: Connection -> IO ()
findUsers conn = runBeamSqlite conn $ do
  users <- runSelectReturningList $ ...

Now we'll use select instead of the insert from the last query. We'll also apply the function all_ to the users field of our database to signify that we want every row. And that's all we need!

findUsers :: Connection -> IO ()
findUsers conn = runBeamSqlite conn $ do
  users <- runSelectReturningList $ select (all_ (_blogUsers blogDb))
  mapM_ (liftIO . print) users

To do a filtered query, we'll start with the same framework. But now we need to turn our select statement into a monadic expression. We'll start by drawing a user from all our users:

findUsers :: Connection -> IO ()
findUsers conn = runBeamSqlite conn $ do
  users <- runSelectReturningList $ select $ do
    user <- all_ (_blogUsers blogDb)
    ...
  mapM_ (liftIO . print) users

And now we'll filter on that by using guard_ and applying one of our lenses. We use the ==. operator for equality, like in Persistent. We also have to wrap our raw comparison value with val_:

findUsers :: Connection -> IO ()
findUsers conn = runBeamSqlite conn $ do
  users <- runSelectReturningList $ select $ do
    user <- all_ (_blogUsers blogDb)
    guard_ (user ^. userName ==. val_ "James")
    return user
  mapM_ (liftIO . print) users

And that's all we need! Beam will generate the SQL for us. Now let's try a join. This is actually much simpler in Beam than with Persistent/Esqueleto. All we need to do is add a couple more statements to our select: draw from the articles, and filter them by the user ID!

findUsersAndArticles :: Connection -> IO ()
findUsersAndArticles conn = runBeamSqlite conn $ do
  results <- runSelectReturningList $ select $ do
    user <- all_ (_blogUsers blogDb)
    guard_ (user ^. userName ==. val_ "James")
    article <- all_ (_blogArticles blogDb)
    guard_ (article ^. articleUserId ==. user ^. userId)
    return (user, article)
  mapM_ (liftIO . print) results

That's all there is to it!
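Beam's select block reads a lot like a do-block in the list monad. As a rough analogy (this is not Beam code), here is the same join over plain in-memory lists, using the standard guard from Control.Monad. The record types and data are hypothetical stand-ins for our tables. The list monad runs a naive nested loop, whereas Beam turns its version into a single SQL query.

```haskell
import Control.Monad (guard)

-- Hypothetical in-memory stand-ins for the users and articles tables.
data User = User { userId :: Int, userName :: String }
  deriving (Show, Eq)

data Article = Article { articleTitle :: String, articleUserId :: Int }
  deriving (Show, Eq)

usersTable :: [User]
usersTable = [User 1 "James", User 2 "Katie"]

articlesTable :: [Article]
articlesTable =
  [ Article "First article" 1
  , Article "Second article" 2
  , Article "Third article" 1
  ]

-- Same shape as the Beam query: draw a user, filter by name, draw an
-- article, keep it only if its foreign key matches, return the pair.
usersAndArticles :: [(User, Article)]
usersAndArticles = do
  user <- usersTable
  guard (userName user == "James")
  article <- articlesTable
  guard (articleUserId article == userId user)
  return (user, article)
```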

Auto Incrementing Primary Keys

In the examples above, we hard-coded all our IDs. But this isn't typically what you want. We should let the database assign each ID via some rule; in our case, auto-incrementing. To do this, instead of creating a User "value", we'll make a User "expression". This is possible thanks to the polymorphic f parameter in our type. We'll leave off the type signature since it's a bit confusing. But here's the expression we'll create:

user1' = User
  default_
  (val_ "James")
  (val_ "james@example.com")
  (val_ 25)
  (val_ "programmer")

We use default_ to represent an expression telling the database to use the column's default value, which for our id column is the next auto-incremented key. Then we lift all our other values with val_. Finally, we'll use insertExpressions instead of insertValues in our Haskell expression.

insertUsers :: Connection -> IO ()
insertUsers conn = runBeamSqlite conn $ runInsert $
  insert (_blogUsers blogDb) $ insertExpressions [ user1' ]

Then we'll have our auto-incrementing key!

Conclusion

That concludes our introduction to the Beam library. As we saw, Beam is a great library that lets you specify a database schema without using any Template Haskell. For more details, make sure to check out the documentation!

For a more in-depth look at using Haskell libraries to make a web app, be sure to read our Haskell Web Series. It goes over some database mechanics as well as creating APIs and testing. As an added challenge, try rewriting the code in that series to use Beam instead of Persistent. See how much of the Servant code needs to change to accommodate that.

And for more examples of cool libraries, download our Production Checklist! There are some more database and API libraries you can check out!

Appendix: Compiler Extensions

{-# LANGUAGE DeriveGeneric #-}
{-# LANGUAGE GADTs #-}
{-# LANGUAGE OverloadedStrings #-}
{-# LANGUAGE FlexibleContexts #-}
{-# LANGUAGE FlexibleInstances #-}
{-# LANGUAGE TypeFamilies #-}
{-# LANGUAGE TypeApplications #-}
{-# LANGUAGE StandaloneDeriving #-}
{-# LANGUAGE TypeSynonymInstances #-}
{-# LANGUAGE MultiParamTypeClasses #-}
{-# LANGUAGE NoMonomorphismRestriction #-}

Deploying Confidently: Haskell and Circle CI

In last week’s article, we deployed our Haskell code to the cloud using Heroku. Our solution worked, but the process was also very basic and very manual. Let’s review the steps we would take to deploy code on a real project with this approach.

- Make a pull request against the master branch
- Merge the code into master
- Pull master locally and run the tests
- Manually run git push heroku master
- Hope everything works fine on Heroku

This isn’t a great approach. Wherever there are manual steps in our development process, we’re likely to forget something. This will almost always come around to bite us at some point. In this article, we’ll see how we can automate our development workflow using Circle CI.

Getting Started with Circle

To follow along with this article, you should already have your project stored on Github. Once you do, integrating with Circle is easy. Go to the Circle website and log in with Github. Then go to “Add Project”. You should see all your personal repositories. Clicking your Haskell project should allow you to integrate the two services.

Now that Circle knows about our repository, it will try to build whenever we push code up to Github. But we have to tell Circle CI what to do once we’ve pushed our code! For this step, we’ll need to create a config file and store it as part of our repository. Note we’ll be using Version 2 of the Circle CI configuration. To define this configuration, we first create a folder called .circleci at the root of our repository. Then we make a YAML file in it called config.yml.

In Circle V2, we specify “workflows” for the Circle container to run through. To keep things simple, we’ll limit our actions to take place within a single workflow. We specify the workflows section at the bottom of our config:

workflows:
  version: 2
  build_and_test:
    jobs:
      - build_project

Now at the top, we’ll again specify version 2, and then lay out a bare-bones definition of our build_project job.

version: 2
jobs:
  build_project:
    machine: true
    steps:
      - checkout
      - run: echo "Hello"

The machine section tells Circle to use one of its default machine images for our project. There’s no built-in Haskell machine configuration we can use, so we’re using a basic image. Then for our steps, we’ll first check out our code, and then run a simple “echo” command. Let’s now consider how to install the Stack utility on this machine so we can actually build our code.

Installing Stack

So right now our Circle container has no Haskell tools. This means we'll need to do everything from scratch. This is a useful learning exercise. We’ll learn the minimal steps we need to take to build a Haskell project on a Linux box. Next week, we’ll see a shortcut we can use.

Luckily, the Stack tool handles most of our problems for us, but we first have to download it. So after checking out our code, we’ll run several different commands to install Stack. Here’s what they look like:

steps:
  - checkout
  - run: wget https://github.com/commercialhaskell/stack/releases/download/v1.6.1/stack-1.6.1-linux-x86_64.tar.gz -O /tmp/stack.tar.gz
  - run: sudo mkdir /tmp/stack-download
  - run: sudo tar -xzf /tmp/stack.tar.gz -C /tmp/stack-download
  - run: sudo chmod +x /tmp/stack-download/stack-1.6.1-linux-x86_64/stack
  - run: sudo mv /tmp/stack-download/stack-1.6.1-linux-x86_64/stack /usr/bin/stack

The wget command downloads Stack from Github. If you’re using a different version of Stack than we are (1.6.1), you’ll need to change the version numbers, of course. We then create a temporary directory to extract the executable into, and use tar to perform the extraction. This leaves us with the stack executable in the appropriate folder. We give this executable execute (x) permissions and then move it onto the machine’s path. Then we can use stack!

Building Our Project

Now we’ve done most of the hard work! From here, we’ll just use the Stack commands to make sure our code works. We’ll start by running stack setup. This will download whatever version of GHC our project needs. Then we’ll run the stack test command to make sure our code compiles and passes all our test suites.

steps:
  - checkout
  - run: wget …
  ...
  - run: stack setup
  - run: stack test

Note that Circle expects our commands to finish with exit code 0. If any of them has a non-zero exit code, the build will be a “failure”. This includes our stack test step. Thus, if we push code that fails any of our tests, we’ll see it as a build failure! This spares us the extra steps of running our tests manually and “hoping” they’ll work in the environment we deploy to.
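A test suite signals failure through its exit code, and that is what Stack, and therefore Circle, sees. As a minimal sketch, here is a hand-rolled test runner, assuming a test-suite of type exitcode-stdio-1.0 in the .cabal file whose main would be runChecks; double and the checks are hypothetical placeholders for your own code and tests.

```haskell
import System.Exit (exitFailure)

-- Hypothetical function under test.
double :: Int -> Int
double = (* 2)

-- Each check pairs a description with its Boolean result.
checks :: [(String, Bool)]
checks =
  [ ("double 2 == 4", double 2 == 4)
  , ("double 0 == 0", double 0 == 0)
  ]

-- Descriptions of the checks that did not hold.
failures :: [String]
failures = [name | (name, ok) <- checks, not ok]

-- Would serve as the test suite's main: report failures and exit with
-- a non-zero code, so `stack test` (and the Circle step) fails.
runChecks :: IO ()
runChecks
  | null failures = putStrLn "All checks passed"
  | otherwise = do
      mapM_ (putStrLn . ("FAILED: " ++)) failures
      exitFailure
```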

Caching

There is a pretty big weakness in this process right now. Every Circle container we make starts from scratch. Thus we’ll have to download GHC and all the different libraries our code depends on for every build. This means you might need to wait 30-60 minutes to see if your code passes depending on the size of your project! We don’t want this. So to make things faster, we’ll tell Circle to cache this information, since it won’t change on most builds. We’ll take the following two steps:

- Only download GHC when stack.yaml changes (since the LTS might have changed). This involves caching the ~/.stack directory.
- Only re-download libraries when either stack.yaml or our .cabal file changes. For this, we’ll cache the .stack-work directory.

For each of these, we’ll make an appropriate cache key. At the start of our build process, we’ll attempt to restore these directories from the cache based on particular keys. As part of each key, we’ll use a checksum of the relevant file.

steps:
  - checkout
  - restore_cache:
      keys:
        - stack-{{ checksum "stack.yaml" }}
  - restore_cache:
      keys:
        - stack-{{ checksum "stack.yaml" }}-{{ checksum "project.cabal" }}

If these files change, the checksum will be different, so Circle won’t be able to restore the directories. Then our other steps will run in full, downloading all the relevant information. At the end of the process, we want to make sure we save these directories under the same keys. We do this with the save_cache step:

steps:
  ...
  - run: stack test
  - save_cache:
      key: stack-{{ checksum "stack.yaml" }}
      paths:
        - "~/.stack"
  - save_cache:
      key: stack-{{ checksum "stack.yaml" }}-{{ checksum "project.cabal" }}
      paths:
        - ".stack-work"

Now subsequent builds won’t take as long! There are other ways we could make our cache keys. For instance, we could use the Stack LTS as part of the key, and bump it every time we change which LTS we’re using. The downside is that this requires a little more manual work, though that work won’t happen too often. The upside is that we won’t need to re-download GHC when we add extra dependencies to stack.yaml.

Deploying to Heroku

Last but not least, we’ll want to actually deploy our code to Heroku every time we push to the master branch. Heroku makes this very easy! First, go to the app dashboard for Heroku. Then find the Deploy tab. You should see an option to connect with Github. Use it to connect your repository. Then, in the automatic deploys section, make sure you check the box telling Heroku to wait for CI to pass before deploying, and enable automatic deploys from master. Now, whenever your build successfully completes, your code will get pushed to Heroku!

Conclusion

You might have noticed that there’s some redundancy with our approaches now! Our Circle CI container will build the code. Then our Heroku container will also build the code! This is very inefficient, and it can lead to deployment problems down the line. Next week, we’ll see how we can use Docker in this process. Docker fully integrates with Circle V2. It will simplify our Circle config definition. It will also spare us from needing to rebuild all our code on Heroku again!

With all these tools at your disposal, it’s time to finally build that Haskell app you always wanted to! Download our Production Checklist to learn some cool libraries you can use!

If you’ve never programmed in Haskell before, hopefully you can see that it’s not too difficult to use! Download our Haskell Beginner’s Checklist and get started!

For All the World to See: Deploying Haskell with Heroku

In several different articles now, we’ve explored how to build web apps using Haskell. See, for instance, our Haskell Web Series and our API integrations series. But all this is meaningless in the end if we don’t have a way to deploy our code so that other people on the internet can find it! In this next series, we’ll explore how we can use common services to deploy Haskell code. It’ll involve a few more steps than deploying code in better-supported languages!

If you’ve never programmed in Haskell at all, you’ve got a few things to learn before you start deploying code! Download our Beginners Checklist for tips on how to start learning! But maybe you’ve done some Haskell already, and need some more ideas for libraries to use. In that case, take a look at our Production Checklist for guidance!

Deploying Code on Heroku

In this article, we’re going to focus on using the Heroku service to deploy our code. Heroku allows us to do this with ease. We can get a quick prototype out for free, making it ideal for hackathons. Like most platforms though, Heroku is easiest to use with more common languages. Heroku can automatically detect JavaScript or Python apps and take the proper steps. Since Haskell isn’t used as much, we’ll need one extra specification to get Heroku support. Luckily, most of the hard work is already done for us.

Buildpacks

Heroku uses the concept of a “buildpack” to determine how to turn your project into runnable code. You’ll deploy your app by pushing your code to a remote repository. Then the buildpack will tell Heroku how to construct the executables you need. If you specify a Node.js project, Heroku will find your package.json file and download everything from NPM. If it’s Python, Heroku will install pip and do the same thing.

Heroku does not have any default buildpacks for Haskell projects. However, there is a buildpack on Github we can use (star this repository!). It will tell our Heroku container to download Stack, and then use Stack to build all our executables. So let’s see how we can build a rudimentary Haskell project using this process.

Creating Our Application

We’ll need to start by making a free account on Heroku. Then we’ll download the Heroku CLI so we can connect from the terminal. Use the heroku login command and enter your credentials.

Now we want to create our application. In your terminal, cd into the directory that has your Haskell Stack project. Make sure it’s already a Git repository. It’s fine if the repository is only local for now. Run this command to create your application (replace haskell-test-app with your desired app name):

heroku create haskell-test-app \
  -b https://github.com/mfine/heroku-buildpack-stack

The -b argument specifies our buildpack, which Heroku will pull from the given Github repository. If this works, you should be able to go to your Heroku dashboard and see an entry for your new application. Your project also gets a Heroku domain, which you can see in the project settings.

Now we need to make a Procfile. This tells Heroku the specific binary we need to run to start our web server. Make sure you have an executable in your .cabal file that starts up the server. Then in the Procfile, you’ll specify that executable under the web name:

web: run-server

Note, though, that you can’t use a hard-coded port! Heroku will choose a port for you, which you can get by reading the PORT environment variable. Here’s what your code might look like:

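import Network.Wai.Handler.Warp (run)
import Servant (serve)
import System.Environment (getEnv)

runServer :: IO ()
runServer = do
  port <- read <$> getEnv "PORT"
  run port (serve myAPI myServer)

Now you’ll need to “scale” the application to make sure it has at least one web dyno (Heroku’s term for a container) to run on. From your repository, run the command: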

heroku ps:scale web=1

Finally, we need to push our application to the Heroku container. To do this, make sure Heroku added a Git remote named heroku. You can check with the following command:

git remote -v

It should show two entries for the heroku remote, one for fetch and one for push. If they don’t exist, you can add the remote like so:

heroku git:remote -a haskell-test-app

Then you can finish up by running this command:

git push heroku master

You should see terminal output indicating that Heroku recognizes your application. If you wait long enough, you'll start to see the Stack build process. If you have any environment variables for your project, set them from the app dashboard. You can also set variables with the following command:

heroku config:set VAR_NAME=var_value

Once our app finishes building, you can visit the URL Heroku gives you. It should look like https://your-app.herokuapp.com. You’ve now deployed your Haskell code to the cloud!
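Both PORT and anything you set with heroku config:set reach your program as environment variables. The getEnv version of runServer above throws an exception when PORT is missing, which is inconvenient when running locally. A small variation, sketched here under the assumption that a default such as 8080 is fine for local runs, uses lookupEnv and readMaybe from base; parseIntSetting and intFromEnv are hypothetical helper names.

```haskell
import Data.Maybe (fromMaybe)
import System.Environment (lookupEnv)
import Text.Read (readMaybe)

-- Pure core: use the raw value if it parses as an Int,
-- otherwise fall back to the given default.
parseIntSetting :: Maybe String -> Int -> Int
parseIntSetting raw fallback = fromMaybe fallback (raw >>= readMaybe)

-- Hypothetical helper: read an Int setting such as PORT from the
-- environment, using the default when it is unset or malformed.
intFromEnv :: String -> Int -> IO Int
intFromEnv name fallback = do
  raw <- lookupEnv name
  return (parseIntSetting raw fallback)
```

In runServer, you would then write port <- intFromEnv "PORT" 8080 before calling run. On Heroku, PORT is always set, so the default only matters on your own machine.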

Weaknesses

There are a few weaknesses to this system. The main one is that our entire build process takes place on the cloud. This might seem like an advantage, and it has its perks. Haskell applications can take a LONG time to compile though. This is especially true if the project is large and involves Template Haskell. Services like Heroku often have timeouts on their build process. So if compilation takes too long, the build will fail. Luckily, the containers will cache previous results. This means Stack won't have to keep re-downloading all the libraries. So even if our first build times out, the second might succeed.

Conclusion

This concludes part 1 of our Haskell Deployment series. We’ll see the same themes quite a bit throughout this series. It’s definitely possible to deploy our Haskell code using common services. But we often have to do a little bit more work to do so. Next week we’ll see how we can automate our deployment process with Circle CI.

Want some more tips on developing web applications with Haskell? Download our Production Checklist to learn about some other libraries you can use! For a more detailed explanation of one approach, read our Haskell Web Skills series.

Connecting to Mailchimp...from Scratch!

Welcome to the third and final article in our series on Haskell API integrations! We started this series off by learning how to send and receive text messages using Twilio. Then we learned how to send emails using the Mailgun service. Both of these involved applying existing Haskell libraries suited to the tasks. This week, we’ll learn how to connect with Mailchimp, a service for managing email subscribers. Only this time, we’re going to do it a bit differently.

There are a couple different Haskell libraries out there for Mailchimp. But we’re not going to use them! Instead, we’ll learn how we can use Servant to connect directly to the API. This should give us some understanding for how to write one of these libraries. It should also make us more confident of integrating with any API of our choosing!

To follow along with the code for this article, check out the mailchimp branch on Github! It’ll show you all the imports and compiler extensions you need!

The topics in this article are quite advanced. If any of it seems crazy confusing, there are plenty of easier resources for you to start off with!

- If you’ve never written Haskell at all, see our Beginners Checklist to learn how to get started!

- If you want to learn more about the Servant library we’ll be using, check out my talk from BayHac 2017 and download the slides and companion code.

- Our Production Checklist has some further resources and libraries you can look at for common tasks like writing web APIs!

Mailchimp 101

Now let’s get going! To integrate with Mailchimp, you first need to make an account and create a mailing list! This is pretty straightforward, and you'll want to save 3 pieces of information. First is the base URL for the Mailchimp API. It will look like this:

https://{server}.api.mailchimp.com/3.0

Here {server} should be replaced by the region that appears in the URL when you log into your account. For instance, mine is https://us14.api.mailchimp.com/3.0. You’ll also need your API key, which appears in the “Extras” section under your account profile. Then you’ll also want to save the name of the mailing list you made.
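Since the base URL only varies by region, it's easy to build in code. Mailchimp API keys also end with the same region suffix (for example -us14), so this sketch offers a way to derive the region from the key too. Both functions are hypothetical helpers, not part of any Mailchimp library.

```haskell
-- Hypothetical helpers for building the Mailchimp API root.

-- API root for a data-center region such as "us14".
baseUrlForRegion :: String -> String
baseUrlForRegion region = "https://" ++ region ++ ".api.mailchimp.com/3.0"

-- Mailchimp API keys end in "-<region>" (e.g. "0123abcd-us14").
-- Return the text after the last '-', or Nothing if there is none.
regionFromApiKey :: String -> Maybe String
regionFromApiKey key =
  case break (== '-') (reverse key) of
    (revRegion, _ : _) | not (null revRegion) -> Just (reverse revRegion)
    _ -> Nothing
```

For example, regionFromApiKey "0123abcd-us14" >>= Just . baseUrlForRegion would give the same root URL as the one shown above.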

Our 3 Tasks

We’ll be trying to perform three tasks using the API. First, we want to derive the internal “List ID” of our particular Mailchimp list. We can do this by analyzing the results of calling the endpoint at:

GET {base-url}/lists

It will give us all the information we need about our different mailing lists.

Once we have the list ID, we can use that to perform actions on that list. We can for instance retrieve all the information about the list’s subscribers by using:

GET {base-url}/lists/{list-id}/members

We’ll add an extra count param to this, as otherwise we'll only see the results for 10 users:

GET {base-url}/lists/{list-id}/members?count=2000

Finally, we’ll use this same basic resource to subscribe a user to our list. This involves a POST request with a request body containing the user’s email address. Note that all requests and responses will be in JSON format:

POST {base-url}/lists/{list-id}/members
{
  "email_address": "person@email.com",
  "status": "subscribed"
}

On top of these endpoints, we’ll also need to add basic authentication to every API call. This is where our API key comes in. Basic auth requires us to provide a “username” and “password” with every API request. Mailchimp doesn’t care what we provide as the username. As long as we provide the API key as the password, we’ll be good. Servant will make it easy for us to do this.
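Under the hood, basic authentication is just an Authorization header: the string username:password, Base64-encoded, after the word Basic. Servant's BasicAuthData takes care of this for us, but a hand-rolled sketch makes the mechanics concrete. This encoder is a hypothetical, minimal implementation that only handles characters below code point 256 (one byte each), which is enough for ASCII credentials like an API key.

```haskell
import Data.Bits (shiftL, shiftR, (.&.), (.|.))
import Data.Char (ord)

-- Standard Base64 alphabet (RFC 4648).
alphabet :: String
alphabet = ['A' .. 'Z'] ++ ['a' .. 'z'] ++ ['0' .. '9'] ++ "+/"

-- Encode one-byte characters, three bytes at a time, with '=' padding.
base64 :: String -> String
base64 = go . map ord
  where
    enc i = alphabet !! i
    sextet n s = (n `shiftR` s) .&. 63
    go (a : b : c : rest) =
      let n = (a `shiftL` 16) .|. (b `shiftL` 8) .|. c
      in map (enc . sextet n) [18, 12, 6, 0] ++ go rest
    go [a, b] =
      let n = (a `shiftL` 16) .|. (b `shiftL` 8)
      in map (enc . sextet n) [18, 12, 6] ++ "="
    go [a] = map (enc . sextet (a `shiftL` 16)) [18, 12] ++ "=="
    go [] = []

-- Value for the Authorization header of a basic-auth request.
basicAuthHeader :: String -> String -> String
basicAuthHeader user pass = "Basic " ++ base64 (user ++ ":" ++ pass)
```

For Mailchimp, the header would come from something like basicAuthHeader "anystring" apiKey, since the username is ignored.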

Types and Instances

Once we have the structure of the API down, our next goal is to define wrapper types. These will allow us to serialize our data into the format demanded by the Mailchimp API. We’ll have four different newtypes. The first will represent a single email list in a response object. All we care about is the list name and its ID, which we represent with Text:

newtype MailchimpSingleList = MailchimpSingleList (Text, Text)
  deriving (Show)

Now we want to be able to deserialize a response containing many different lists:

newtype MailchimpListResponse =
  MailchimpListResponse [MailchimpSingleList]
  deriving (Show)

In a similar way, we want to represent a single subscriber and a response containing several subscribers:

newtype MailchimpSubscriber = MailchimpSubscriber
  { unMailchimpSubscriber :: Text }
  deriving (Show)

newtype MailchimpMembersResponse =
  MailchimpMembersResponse [MailchimpSubscriber]
  deriving (Show)

The purpose of these newtypes is to let us define JSON instances for them. For the most part, we only need FromJSON instances, so we can deserialize the responses we get back from the API. Here’s what our different instances look like:

instance FromJSON MailchimpSingleList where
  parseJSON = withObject "MailchimpSingleList" $ \o -> do
    name <- o .: "name"
    id_ <- o .: "id"
    return $ MailchimpSingleList (name, id_)

instance FromJSON MailchimpListResponse where
  parseJSON = withObject "MailchimpListResponse" $ \o -> do
    -- Each element is decoded with the MailchimpSingleList instance.
    lists <- o .: "lists"
    return $ MailchimpListResponse lists

instance FromJSON MailchimpSubscriber where
  parseJSON = withObject "MailchimpSubscriber" $ \o -> do
    email <- o .: "email_address"
    return $ MailchimpSubscriber email

instance FromJSON MailchimpMembersResponse where
  parseJSON = withObject "MailchimpMembersResponse" $ \o -> do
    -- The members endpoint returns its subscribers under "members".
    members <- o .: "members"
    return $ MailchimpMembersResponse members

And last, we need a ToJSON instance for our individual subscriber type, since we’ll be sending it as a POST request body:

instance ToJSON MailchimpSubscriber where
  toJSON (MailchimpSubscriber email) = object
    [ "email_address" .= email
    , "status" .= ("subscribed" :: Text)
    ]

Defining a Server Type

Now that we've defined our types, we can go ahead and define our actual API using Servant. This might seem a little confusing. After all, we’re not building a Mailchimp server! But by writing this API, we can use the client function from the servant-client library. This will derive all the client functions we need to call into the Mailchimp API. Let’s start by defining a combinator that describes our authentication format using BasicAuth. Since we aren’t writing any server code, we don’t need a meaningful “return” type for our authentication, so we use ().

type MCAuth = BasicAuth "mailchimp" ()

Now let’s write the lists endpoint. It has the authentication, our string path, and then returns our list response.

type MailchimpAPI =
  MCAuth :> "lists" :> Get '[JSON] MailchimpListResponse :<|>
  ...

For our next endpoint, we need to capture the list ID as a parameter. Then we’ll add the extra “count” query parameter. This endpoint returns the members of our list.

type MailchimpAPI =
  ...
  -- GET /lists/{list-id}/members is Mailchimp's list-members endpoint
  MCAuth :> "lists" :> Capture "list-id" Text :> "members" :>
    QueryParam "count" Int :> Get '[JSON] MailchimpMembersResponse :<|>

Finally, we need the “subscribe” endpoint. This will look like our last endpoint, except without the count parameter and as a POST request. Then we’ll include a single subscriber in the request body.

type MailchimpAPI =
  ...
  -- POST /lists/{list-id}/members adds a single subscriber to the list
  MCAuth :> "lists" :> Capture "list-id" Text :> "members" :>
    ReqBody '[JSON] MailchimpSubscriber :> Post '[JSON] ()

mailchimpApi :: Proxy MailchimpAPI
mailchimpApi = Proxy :: Proxy MailchimpAPI

Now with servant-client, it’s very easy to derive the client functions for these endpoints. We write out the type signatures and then pattern match on the result of client. Note how the type signatures line up with the parameters that we expect based on the endpoint definitions. Each endpoint takes the BasicAuthData type, which contains a username and password for authenticating the request.

fetchListsClient :: BasicAuthData -> ClientM MailchimpListResponse

fetchSubscribersClient :: BasicAuthData -> Text -> Maybe Int
  -> ClientM MailchimpMembersResponse

subscribeNewUserClient :: BasicAuthData -> Text -> MailchimpSubscriber
  -> ClientM ()

( fetchListsClient :<|>
  fetchSubscribersClient :<|>
  subscribeNewUserClient) = client mailchimpApi

Running Our Client Functions

Now let’s write some helper functions so we can call these functions from the IO monad. Here’s a generic function that will take one of our endpoints and call it using Servant’s runClientM mechanism.

runMailchimp :: (BasicAuthData -> ClientM a) -> IO (Either ServantError a)
runMailchimp action = do
  baseUrl <- getEnv "MAILCHIMP_BASE_URL"
  apiKey <- getEnv "MAILCHIMP_API_KEY"
  trueUrl <- parseBaseUrl baseUrl
  -- Mailchimp accepts any username, with the API key as the password
  let userData = BasicAuthData "username" (pack apiKey)
  manager <- newTlsManager
  -- Newer servant-client versions add a cookie-jar field to ClientEnv;
  -- there, build this value with mkClientEnv manager trueUrl instead
  let clientEnv = ClientEnv manager trueUrl
  runClientM (action userData) clientEnv

First we read our environment variables and create a network connection manager. Then we run the client action against the ClientEnv. Not too difficult.
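One wrinkle: runMailchimp reads its settings with getEnv, which throws an IOException when a variable is unset, so a missing key escapes as an exception rather than becoming a Left like every other failure here. As a hedged alternative (not the article's code), here is a minimal sketch that reads the same two variables with lookupEnv from base and reports every missing one as a Left. The names MailchimpConfig, buildConfig, and loadConfig are hypothetical.

```haskell
import Control.Monad (join)
import System.Environment (lookupEnv)

-- Hypothetical record holding the two settings runMailchimp reads.
data MailchimpConfig = MailchimpConfig
  { mcBaseUrl :: String
  , mcApiKey  :: String
  } deriving (Show, Eq)

-- Pure core: given any lookup function, either build the config or
-- report every missing variable (not just the first one).
buildConfig :: (String -> Maybe String) -> Either [String] MailchimpConfig
buildConfig look =
  case (look "MAILCHIMP_BASE_URL", look "MAILCHIMP_API_KEY") of
    (Just url, Just key) -> Right (MailchimpConfig url key)
    (url, key) ->
      Left (missing "MAILCHIMP_BASE_URL" url ++ missing "MAILCHIMP_API_KEY" key)
  where
    missing :: String -> Maybe String -> [String]
    missing name Nothing = [name]
    missing _ (Just _) = []

-- IO wrapper: lookupEnv returns Nothing for an unset variable,
-- whereas getEnv throws an IOException.
loadConfig :: IO (Either [String] MailchimpConfig)
loadConfig = do
  url <- lookupEnv "MAILCHIMP_BASE_URL"
  key <- lookupEnv "MAILCHIMP_API_KEY"
  let env = [("MAILCHIMP_BASE_URL", url), ("MAILCHIMP_API_KEY", key)]
  return (buildConfig (\name -> join (lookup name env)))

main :: IO ()
main = do
  result <- loadConfig
  case result of
    Left missingVars ->
      putStrLn ("Missing environment variables: " ++ show missingVars)
    Right config -> putStrLn ("Using base URL " ++ mcBaseUrl config)
```

Keeping buildConfig pure means it can be checked with a plain lookup function, while loadConfig is the only piece that touches the real environment. A runMailchimp built on this could return early on the Left before constructing any request.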

Now we’ll write a function that will take a list name, query the API for all our lists, and give us the list ID for that name. It will return an Either value since the client call might actually fail. It calls our list client and filters through the results until it finds a list whose name matches. We’ll return an error value if the list isn’t found.

fetchMCListId :: Text -> IO (Either String Text)
fetchMCListId listName = do
  listsResponse <- runMailchimp fetchListsClient
  case listsResponse of
    Left err -> return $ Left (show err)
    Right (MailchimpListResponse lists) ->
      case find nameMatches lists of
        Nothing -> return $ Left "Couldn't find list with that name!"
        Just (MailchimpSingleList (_, id_)) -> return $ Right id_
  where
    nameMatches :: MailchimpSingleList -> Bool
    nameMatches (MailchimpSingleList (name, _)) = name == listName

Our function for retrieving the subscribers for a particular list is more straightforward. We make the client call and either return the error or else unwrap the subscriber emails and return them.

fetchMCListMembers :: Text -> IO (Either String [Text])
fetchMCListMembers listId = do
  membersResponse <- runMailchimp
    (\auth -> fetchSubscribersClient auth listId (Just 2000))
  case membersResponse of
    Left err -> return $ Left (show err)
    Right (MailchimpMembersResponse subs) -> return $
      Right (map unMailchimpSubscriber subs)

And our subscribe function looks very similar. We wrap the email up in the MailchimpSubscriber type and then we make the client call using runMailchimp.

subscribeMCMember :: Text -> Text -> IO (Either String ())
subscribeMCMember listId email = do
  subscribeResponse <- runMailchimp (\auth ->
    subscribeNewUserClient auth listId (MailchimpSubscriber email))
  case subscribeResponse of
    Left err -> return $ Left (show err)
    Right _ -> return $ Right ()

The SubscriberList Effect

Since the rest of our server uses Eff, let’s add an effect type for our subscription list. This will help abstract away the Mailchimp details. We’ll call this effect SubscriberList, and it will have a constructor for each of our three actions:

data SubscriberList a where
  FetchListId :: SubscriberList (Either String Text)
  FetchListMembers ::
    Text -> SubscriberList (Either String [Subscriber])
  SubscribeUser ::
    Text -> Subscriber -> SubscriberList (Either String ())

fetchListId :: (Member SubscriberList r) => Eff r (Either String Text)
fetchListId = send FetchListId

fetchListMembers :: (Member SubscriberList r) =>
  Text -> Eff r (Either String [Subscriber])
fetchListMembers listId = send (FetchListMembers listId)

subscribeUser :: (Member SubscriberList r) =>
  Text -> Subscriber -> Eff r (Either String ())
subscribeUser listId subscriber =
  send (SubscribeUser listId subscriber)

Note that we use our wrapper type Subscriber from the schema.
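Since SubscriberList is just a GADT describing requests, any function of type SubscriberList a -> IO a can interpret it, and nothing forces that function to talk to Mailchimp. As a hedged illustration, here is a hypothetical in-memory interpreter (inMemoryToIO and fakeListId are made-up names) that could serve as a test double. To compile on its own, it redeclares the effect and uses a stand-in Subscriber newtype instead of the Persistent entity; the subscriberEmail field name matches how the article uses it, but the rest is an assumption.

```haskell
{-# LANGUAGE GADTs #-}
{-# LANGUAGE OverloadedStrings #-}

import Data.IORef (IORef, modifyIORef', newIORef, readIORef)
import Data.Text (Text)
import qualified Data.Text as T

-- Stand-in for the Persistent Subscriber entity; assumed to hold one email.
newtype Subscriber = Subscriber { subscriberEmail :: Text }
  deriving (Show, Eq)

-- The same three requests as the SubscriberList effect above.
data SubscriberList a where
  FetchListId :: SubscriberList (Either String Text)
  FetchListMembers :: Text -> SubscriberList (Either String [Subscriber])
  SubscribeUser :: Text -> Subscriber -> SubscriberList (Either String ())

-- Hypothetical ID of the single fake list this interpreter knows about.
fakeListId :: Text
fakeListId = "test-list"

-- Hypothetical in-memory interpreter: members live in an IORef instead
-- of on Mailchimp, and any other list ID is reported as an error.
inMemoryToIO :: IORef [Subscriber] -> SubscriberList a -> IO a
inMemoryToIO _ FetchListId = return (Right fakeListId)
inMemoryToIO ref (FetchListMembers listId)
  | listId == fakeListId = Right <$> readIORef ref
  | otherwise = return (Left ("Unknown list: " ++ T.unpack listId))
inMemoryToIO ref (SubscribeUser listId sub)
  | listId == fakeListId = Right <$> modifyIORef' ref (++ [sub])
  | otherwise = return (Left ("Unknown list: " ++ T.unpack listId))

main :: IO ()
main = do
  ref <- newIORef []
  listResult <- inMemoryToIO ref FetchListId
  case listResult of
    Left err -> putStrLn err
    Right listId -> do
      _ <- inMemoryToIO ref (SubscribeUser listId (Subscriber "a@example.com"))
      members <- inMemoryToIO ref (FetchListMembers listId)
      print members
```

Partially applied, inMemoryToIO ref has the same per-request shape as a Mailchimp-backed interpreter, so, assuming the freer runNat used in this series, it could be handed to runNat to run handlers like subscribeHandler without any network access.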

To complete the puzzle, we need a function to convert this action into IO. Like all our different transformations, we use runNat on a natural transformation:

runSubscriberList :: (Member IO r) =>
  Eff (SubscriberList ': r) a -> Eff r a
runSubscriberList = runNat subscriberListToIO
  where
    subscriberListToIO :: SubscriberList a -> IO a
    ...

Now for each constructor, we’ll call into the helper functions we wrote above. We’ll add a little extra logic to unwrap the Mailchimp-specific types we used and to handle errors.

runSubscriberList :: (Member IO r) =>
  Eff (SubscriberList ': r) a -> Eff r a
runSubscriberList = runNat subscriberListToIO
  where
    subscriberListToIO :: SubscriberList a -> IO a
    subscriberListToIO FetchListId = do
      listName <- pack <$> getEnv "MAILCHIMP_LIST_NAME"
      fetchMCListId listName
    subscriberListToIO (FetchListMembers listId) = do
      membersEither <- fetchMCListMembers listId
      case membersEither of
        Left e -> return $ Left e
        Right emails -> return $ Right (Subscriber <$> emails)
    subscriberListToIO (SubscribeUser listId (Subscriber email)) =
      subscribeMCMember listId email

Modifying the Server

The last step of this process is to incorporate the new effects into our server. Our aim is to replace the simplistic Database effect we were using before. This is a snap. We’ll start by substituting our SubscriberList into the natural transformation used by Servant:

transformToHandler ::
  (Eff '[SubscriberList, Email, SMS, IO]) :~> Handler
transformToHandler = NT $ \action -> do
  let ioAct = runM $ runTwilio (runEmail (runSubscriberList action))
  liftIO ioAct

We now need to change our other server functions to use the new effects. In both cases, we’ll first fetch the list ID and handle the failure case, and then we can proceed with the other operation. Here’s how we subscribe a new user:

subscribeHandler :: (Member SubscriberList r) => Text -> Eff r ()
subscribeHandler email = do
  listId <- fetchListId
  case listId of
    Left _ -> error "Failed to find list ID!"
    Right listId' -> do
      _ <- subscribeUser listId' (Subscriber email)
      return ()

Finally, we send an email like so, combining last week’s Email effect with the SubscriberList effect we just created:

emailList :: (Member SubscriberList r, Member Email r) =>
  (Text, ByteString, Maybe ByteString) -> Eff r ()
emailList content = do
  listId <- fetchListId
  case listId of
    Left _ -> error "Failed to find list ID!"
    Right listId' -> do
      subscribers <- fetchListMembers listId'
      case subscribers of
        Left _ -> error "Failed to find subscribers!"
        Right subscribers' -> do
          _ <- sendEmailToList
            content (subscriberEmail <$> subscribers')
          return ()

Conclusion

That wraps up our exploration of Mailchimp and our series on integrating APIs with Haskell! In part 1 of this series, we saw how to send and receive texts using the Twilio API. Then in part 2, we sent emails to our users with Mailgun. Finally, we used the Mailchimp API to more reliably store our list of subscribers. We even did this from scratch, without the use of a library like we had for the other two effects. We used Servant to great effect here, specifying what our API would look like even though we weren’t writing a server for it! This enabled us to derive client functions that could call the API for us.

This series combined tons of complex ideas from many other topics. If you were a little lost trying to keep track of everything, I highly recommend you check out our Haskell Web Skills series. It’ll teach you a lot of cool techniques, such as how to connect Haskell to a database and set up a server with Servant. You should also download our Production Checklist for some more ideas about cool libraries!

And of course, if you’re a total beginner at Haskell, hopefully you understand now that Haskell CAN be used for some very advanced functionality. Furthermore, we can do so with incredibly elegant solutions that separate our effects very nicely. If you’re interested in learning more about the language, download our free Beginners Checklist!

Mailing it out with Mailgun!

Last week, we started our exploration of the world of APIs by integrating Haskell with Twilio. We were able to send a basic SMS message, and then create a server that could respond to a user’s message. This week, we’re going to venture into another type of effect: sending emails. We’ll be using Mailgun for this task, along with Hailgun, a Haskell library for its API.

You can take a look at the full code for this article by looking at the mailgun branch on our Github repository. If this article sparks your curiosity for more Haskell libraries, you should download our Production Checklist!

Making an Account

To start with, we’ll obviously need a Mailgun account. Signing up is free and straightforward. It will ask you for an email domain, but you don’t need one to get started. As long as you’re in testing mode, you can use a sandbox domain they provide to host your mail server.

With Twilio, we had to specify a “verified” phone number that we could message in testing mode. Similarly, you will also need to designate a verified email address. Your sandboxed domain will only be able to send to this address. You’ll also need to save a couple pieces of information about your Mailgun account. In particular, you need your API Key, the sandboxed email domain, and the reply address for your emails to use. Save these as environment variables on your local system and remote machine.

Basic Email

Now let’s get a feel for the Hailgun code by sending a basic email. All this occurs in the simple IO monad. We ultimately want to use the function sendEmail, which requires both a HailgunContext and a HailgunMessage:

sendEmail
  :: HailgunContext
  -> HailgunMessage
  -> IO (Either HailgunErrorResponse HailgunSendResponse)

We’ll start by retrieving our environment variables. With our domain and API key, we can build the HailgunContext we’ll need to pass as an argument.